- Jul 14, 2021

- 18 minutes

Dr Marcin Pietrzyk

Michal Rachtan

Kamil Zalewski

Outline

- Intro

- Business Benefits & Value

- Business Case Studies

  A. Internal Audit & Internal Fraud Detection (Audit)

  B. CRO Trader Supervision

  C. Single Client View (CRO)

- Key Elements for Success

- Data Foundations

  A. Data Lake

  B. Entity Resolution

- Call to Action

Intro

In the wake of the 2008 financial crisis, financial regulators around the world sought to standardize risk mitigation frameworks, significantly increase fines for non-compliant firms, and increase the amount of accountability that firms require from personnel.

These trends have put unprecedented pressure on Chief Compliance Officers and Chief Risk Officers, who are tasked with a number of compliance-related goals, including internal fraud detection, trader supervision, and implementing single-client view (SCV) capabilities in order to comply with anti-money laundering directives.

It’s no secret that the hype surrounding artificial intelligence has, in most cases, far outpaced the capabilities that artificial intelligence firms are able to deliver.

According to a recent survey conducted by Deloitte, more than two-thirds of financial services executives are not actively deploying AI solutions at their firms, and over 10% have not begun any AI initiatives whatsoever (1). That means that risk professionals who can distinguish real capabilities from implausible or oversold technologies will do a better job protecting their companies.

In this white paper, we lay out the state of AI technology as it relates to risk mitigation in financial services. We describe AI’s strengths and weaknesses in common compliance workflows, we share best practices around data hygiene and architecture, and we provide a framework for assessing and selecting AI vendors based on their track records of success.

1. https://www2.deloitte.com/uk/en/pages/financial-services/articles/ai-and-risk-management.html

2. https://www.bloomberg.com/quicktake/the-london-whale

Value

Quantifying risk or risk mitigation is notoriously difficult. No financial service firm can eliminate compliance risk entirely, so firms always face the risk of regulatory fines and reputation damage, no matter how sophisticated their compliance structures are.

At the same time, firms can set up early warning or alerting systems to shorten their time to the discovery of fraud and accelerate measures for remediation, if necessary.

In cases such as the London Whale, the risks and losses faced by banks were far higher than they would have been if the suspicious activity had been properly logged and actioned early on (2).

For CROs looking to adopt or deploy new AI and analytics technologies, it’s critical to select a key set of metrics that can be tracked before, during, and after the roll-out of your new solution. That helps you demonstrate your progress to executive leadership and identify opportunities for process improvement.

Ultimately, we recommend quantifying and measuring your success in risk management based on the following metrics:

- Risk reduction. Sum of risks mitigated thanks to the solution implemented.

- Regulatory fines avoided. Cost avoided thanks to the solution implemented.

- Manual work avoided. Amount of manual work related to meeting compliance criteria that has been automated.
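
As a rough illustration of how these three metrics might be tracked before and after a roll-out, the Python sketch below compares two reporting snapshots. The class name, field names, and all figures are hypothetical placeholders chosen for the example, not data from any firm.

```python
from dataclasses import dataclass


@dataclass
class ComplianceSnapshot:
    """Hypothetical metrics for one reporting period (all values illustrative)."""
    expected_risk_loss: float   # estimated annual loss from unmitigated risks
    regulatory_fines: float     # fines paid or provisioned in the period
    manual_review_hours: float  # analyst hours spent on compliance checks


def improvement(before: ComplianceSnapshot, after: ComplianceSnapshot) -> dict:
    """Return the absolute reduction in each metric between two periods."""
    return {
        "risk_reduction": before.expected_risk_loss - after.expected_risk_loss,
        "fines_avoided": before.regulatory_fines - after.regulatory_fines,
        "manual_hours_avoided": before.manual_review_hours - after.manual_review_hours,
    }


# Assumed example figures: a baseline year and the year after deployment.
baseline = ComplianceSnapshot(10_000_000.0, 2_000_000.0, 12_000.0)
post_rollout = ComplianceSnapshot(7_500_000.0, 500_000.0, 4_000.0)
report = improvement(baseline, post_rollout)
```

Capturing the baseline snapshot before deployment is the important design choice here: without it, there is nothing to compare the post-rollout figures against.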

Business Case Studies

The best way to plan and implement a new AI or analytics solution for risk mitigation is to study the successful technology implementations of other firms. While no two financial services firms are alike, the data and operational challenges that firms in the industry face are often similar in scope and complexity.

We detail three case studies on Internal Audit & Internal Fraud Detection (Audit), Trader Supervision, and Single Client View in order to provide a broad view of how AI can be leveraged for risk mitigation.

Case Study 1: Internal Audit & Internal Fraud Detection

One of the most shocking revelations about the long-running Libor scandal was that the bankers who had colluded to fix interbank lending rates had often sent each other emails and texts asking for specific interest rate targets. In other words, the market manipulation scandal that led to banks paying over $9 billion USD in fines could have been identified early on, but only if bank auditors and regulators had known where to look.

That said, it’s not surprising when fraud goes undetected. Every day, bank personnel at large institutions generate tens of thousands of emails, instant messages, and text messages. The traditional form of auditing, a haphazard, ad hoc look at certain teams or functional areas of a bank, tends to be random and reactive. As such, it’s like throwing a dart at a board.

The reason that audits have taken this form is that banks are resource-constrained. It’s financially and practically unreasonable to have auditors analyze every communication, record, and process that occurs at a bank. Given limited resources, banks and regulators have chosen to spot-check those communications and processes that they are able to, and hope for the best.

That reality is changing because new artificial intelligence technologies enable organizations to analyze massive quantities of communications and process data in near real time. As auditors become more data-driven and technology forward, they are training AI models on existing datasets in order to raise alerts when traders or other bank personnel attempt to manipulate the market.

Moving forward, the financial services firms who will be the most successful are the ones who implement a comprehensive approach to audits that is proactive and targeted, and that combines early warning systems with the types of spot checks with which banks are familiar. From monitoring access permissions (e.g., making sure users are not overprovisioned with administrative privileges) to identifying conflicts of interest (e.g., through link analysis), auditing is increasingly linked to automation and AI.
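
To make the idea of link analysis concrete, here is a minimal Python sketch that flags employees who share a contact attribute (such as an address or phone number) with a counterparty. The function name, record layout, and sample values are assumptions made for illustration; a production system would work over far richer graphs.

```python
from collections import defaultdict


def find_shared_links(employees, counterparties):
    """Flag employee/counterparty pairs that share a contact attribute."""
    # Index every attribute value to the employees who hold it.
    index = defaultdict(set)
    for emp_id, attrs in employees.items():
        for attr in attrs:
            index[attr].add(emp_id)

    # Look up each counterparty attribute in the employee index.
    alerts = []
    for cp_id, attrs in counterparties.items():
        for attr in sorted(attrs):
            for emp_id in sorted(index.get(attr, ())):
                alerts.append((emp_id, cp_id, attr))
    return alerts


# Hypothetical sample data: employee E1 and vendor V9 share an address.
employees = {
    "E1": {"phone:555-0100", "addr:1 High St"},
    "E2": {"phone:555-0199"},
}
counterparties = {
    "V9": {"addr:1 High St"},
    "V7": {"phone:555-0142"},
}
alerts = find_shared_links(employees, counterparties)
```

Each alert is a lead for an auditor to review, not proof of a conflict of interest.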

Still, it isn’t enough simply to implement these technologies. Financial services firms also need to test and refine their models over time as bad actors change their patterns of behavior. Ultimately, the success of these audits will be judged on banks’ ability to avoid costly scandals and uncover incidents like the Libor manipulation ring early on.

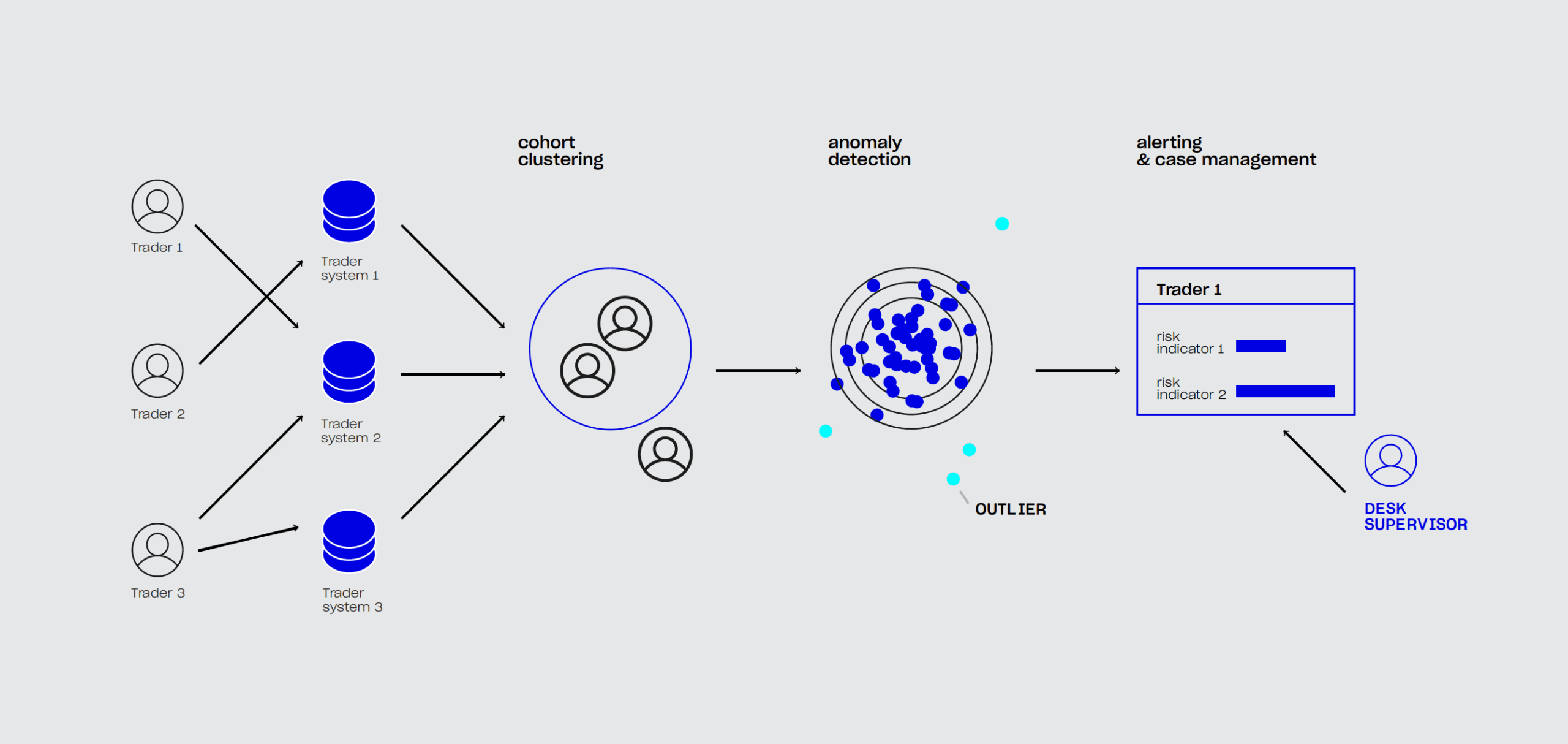

Case Study 2: Trader Supervision

Rogue trading, or fraudulent trades conducted by bank employees, can cost banks billions of dollars. To ensure that banks are not overexposed to liquidity risks, financial regulators require banks to set aside a certain amount of capital in the form of cash reserves. The intent is for these cash reserves to cover any losses that banks could suffer due to rogue trading. In the event that rogue trading occurs, banks will still have the cash they need to cover their margins and continue their normal activities.

In theory, the quantity of cash reserves that banks must maintain is directly correlated with the trading risk they’re exposed to, so a bank that can demonstrably lower its trading risk can hold less capital in reserve.

It’s not often that a CRO can help generate revenue, rather than being viewed as a cost center, but having the ability to free up liquidity and decrease risk has a direct impact on a bank’s bottom line.

The problem with reducing risk in a systematic, measurable way is that the data generated by traders is massive and often difficult to integrate into a common system. Each trader generates thousands of individual data points across a number of complex portfolios each week, making it impossible for banks to manually track all trader behavior. While anti-fraud solutions for trader oversight have existed for some time, the advent of AI and machine learning technologies that rely on unsupervised learning methods means that traders can no longer perpetrate fraudulent trades simply by avoiding the patterns of the past.

That’s because unsupervised learning methods can detect anomalies in a trader’s behavior by comparing it with that trader’s own day-to-day actions. At the same time, because these systems can incorporate signals from across a bank’s trading platforms, they can minimize the quantity of false positives that banks receive.
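
The sketch below illustrates the per-trader baseline idea in Python. It uses a simple z-score as a statistical stand-in for the unsupervised methods described above; the function name, the threshold of 3.0, and the sample volumes are all assumptions for the example rather than a recommended configuration.

```python
from statistics import mean, stdev


def flag_anomalies(history, today, threshold=3.0):
    """Return traders whose activity today deviates sharply from their own baseline."""
    flagged = []
    for trader, past in history.items():
        # A baseline needs at least two observations and a value for today.
        if len(past) < 2 or trader not in today:
            continue
        mu, sigma = mean(past), stdev(past)
        if sigma == 0:
            continue  # no variation in history, so a z-score is undefined
        z = (today[trader] - mu) / sigma
        if abs(z) > threshold:
            flagged.append((trader, round(z, 2)))
    return flagged


# Hypothetical daily traded volumes per trader (arbitrary units).
history = {
    "trader_a": [100, 110, 95, 105, 90],
    "trader_b": [900, 1100, 950, 1050, 1000],
}
today = {"trader_a": 400, "trader_b": 1080}
flagged = flag_anomalies(history, today)
```

In this example only `trader_a` is flagged, even though `trader_b` trades a larger absolute volume, because each trader is measured against their own history rather than a bank-wide rule.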

As with new auditing technologies, the benefit in improved Trader Supervision capabilities is the prevention of operational and regulatory losses traditionally associated with malicious or non-compliant traders at firms.

Case Study 3: Single Client View

After the collapse of Lehman Brothers in 2008, many banks realised that their siloed IT systems left them unable to adequately assess their own risk. The problem was data integration. Even if banks identified a risk in one system, they found it difficult to assess the impact of that risk on other portfolios.

One simple example: high-risk borrowers. In many cases, individuals who held bank accounts with a bank across multiple countries were viewed as multiple individuals. In that type of scenario, a default by that individual in the United States would trigger no action toward that individual in the United Kingdom. This obviously posed a significant risk for banks, who needed to be able to act on all available information as they minimised their financial risk.

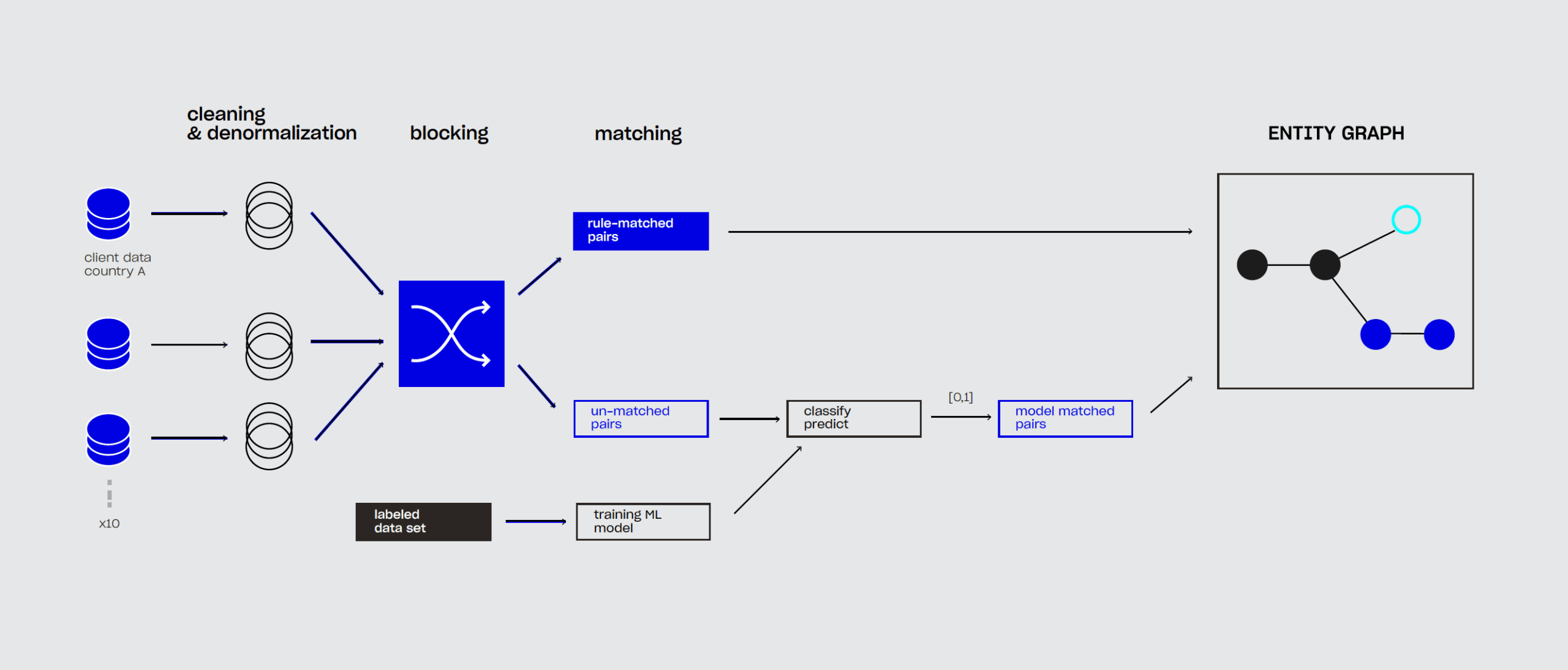

In other words, banks require a single-client view, or a unified look at clients and client risk across all systems. At the largest banks, solving this entity resolution problem requires aggregating and cross-referencing information from hundreds of IT systems that do not follow the same data standards and structures.

Normally, cleaning and joining this information would have required considerable manual review of client data. After all, incorrectly merging or consolidating two entities is just as dangerous as failing to link them.

AI and ML capabilities can significantly accelerate the entity resolution process by automatically surfacing likely matches and high-risk client profiles. Banks that implement these capabilities gain visibility on global customer activity and can accurately assess their risk exposure to money laundering and other high-risk financial activities (e.g., customers who have been issued too much credit).
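
As a minimal sketch of how likely matches might be surfaced, the Python example below scores two client records and sorts the pair into one of three bands. It uses the standard library's `difflib.SequenceMatcher` for name similarity; the field names, weights, and thresholds are assumptions for illustration and would need tuning on real data.

```python
from difflib import SequenceMatcher


def normalize(name):
    """Lowercase, drop periods, and collapse whitespace."""
    return " ".join(name.lower().replace(".", "").split())


def match_score(a, b):
    """Combine name similarity and date-of-birth agreement (assumed weights)."""
    name_sim = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    dob_match = 1.0 if a["dob"] == b["dob"] else 0.0
    return 0.7 * name_sim + 0.3 * dob_match


def classify(a, b, auto=0.95, review=0.75):
    """Band a candidate pair: link automatically, send to a human, or reject."""
    score = match_score(a, b)
    if score >= auto:
        return "auto_link"
    if score >= review:
        return "manual_review"
    return "no_match"


# Hypothetical records for the same person held in two country systems.
us_record = {"name": "Jonathan A. Smith", "dob": "1980-02-14"}
uk_record = {"name": "Jonathon Smith", "dob": "1980-02-14"}
unrelated = {"name": "Maria Gonzalez", "dob": "1975-07-01"}

result_same_person = classify(us_record, uk_record)
result_unrelated = classify(us_record, unrelated)
```

The middle band exists because an incorrect merge is as costly as a missed link: borderline pairs go to a reviewer instead of being consolidated automatically.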

Such solutions have already allowed banks to detect previously unknown cases of terrorism financing, as well as cases in which bad actors tried to use fictitious or related identities to hide the true sources of funding.

Moving forward, an integrated view of client holdings, identity, and risk will enable banks to comply with or exceed regulator-issued guidance (e.g., BCBS 239/283) on anti-money laundering.

Key Elements for Success

Successful software implementation requires buy-in from across an organization and careful planning. Without a clear strategy and top-level support, it’s unlikely that a digital transformation project will get off the ground. At the same time, without adequate training and user adoption measures, even the most well-designed software is bound to fail.

The keys to a successful AI or ML deployment in financial services are:

1. A clear strategy and sustained executive support.

All stakeholders should agree on the project’s vision, goals, benchmarks, and success metrics.

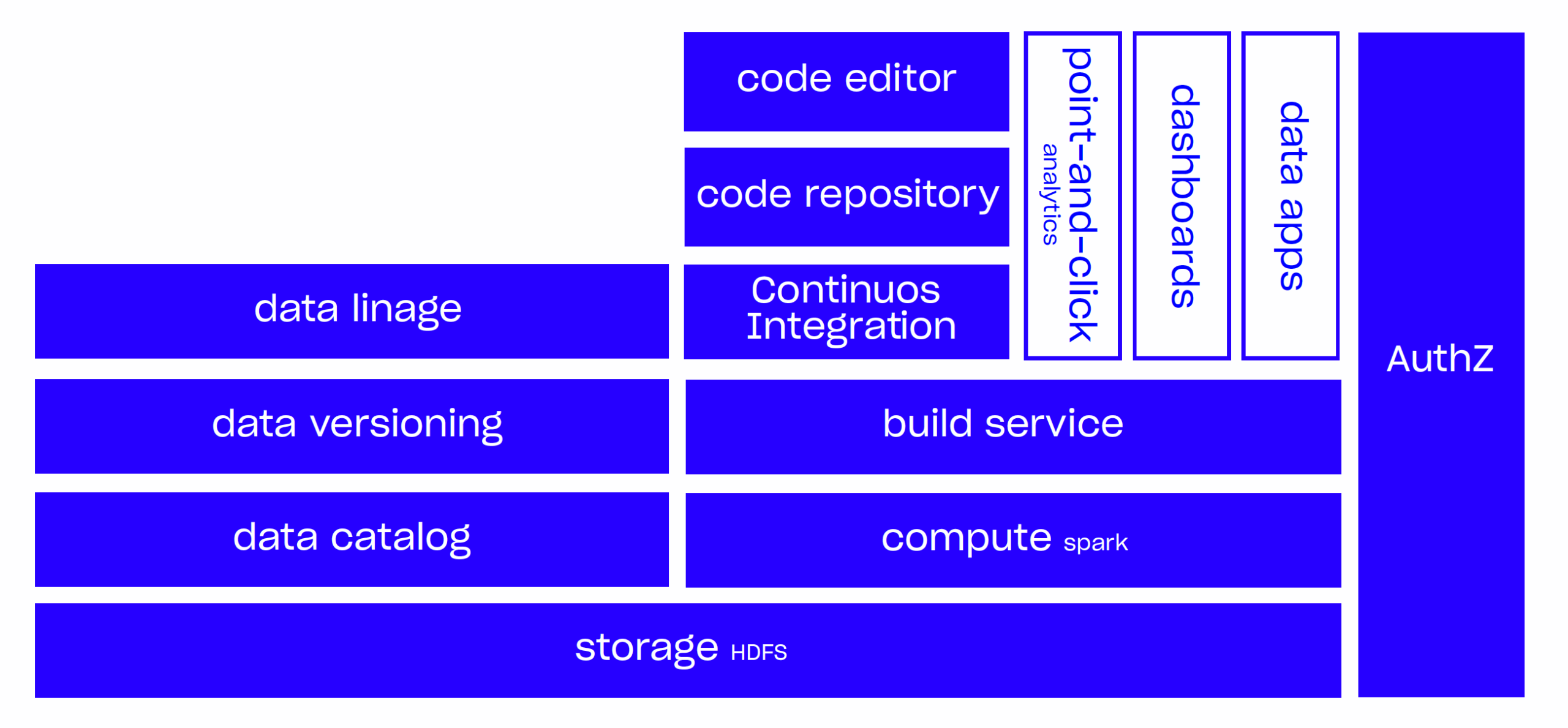

2. A strong data foundation.

A functioning data lake and well-planned data integration should be considered strategic investments. Otherwise, the project is unlikely to be successful.

3. Modern infrastructure and cloud as a data foundation.

Sophisticated data analytics require flexible storage and scalable computation resources. The ability to combine on-premises infrastructure and public cloud is essential.

4. Specialized deployment skills and a demonstrated ability to deliver a successful outcome.

These are sometimes missing in-house, as personnel at financial services firms may lack experience delivering AI-based risk-reduction solutions. Partnering with a third party is a viable option in this case.

5. A modern method of project planning and software iteration.

Agile-based and/or lean methodologies are best suited for the tasks described, as they allow for rapid deployment and operational flexibility when new challenges arise.

Data Foundations

Data Lake

One of the key problems large organisations face today is that their data is siloed, or spread across many systems not designed to talk to each other. Organisations often respond to this problem by developing data lakes, or central collections of data assets.

Unfortunately, these data lakes often remain fragmented and overly complex. Not only are they costly to implement, they fail to achieve the original goal of data unification, and they’re unsuitable as a foundation for AI technologies.

The key to collecting data from across an organisation and making it usable is to implement a central data platform. That doesn’t necessarily limit companies to any particular technology or set of tools. Rather, we use the term central data platform to refer to a system that’s been designed and implemented with the company’s long-term goals in mind, whether that means AI, risk reduction, or entity resolution.

Once those goals have been established, the system can then be designed for the correct form of data processing (e.g., structured, unstructured), real-time processing requirements (e.g., batch processing vs. real-time processing), load management (i.e., anticipated user count), and other considerations. Making these decisions without first thinking about the end goals of the system, on the other hand, is likely to lead to a costly and unusable data centralisation solution.

Data Pipelines and Entity Resolution

Simply collecting datasets in a single place doesn’t guarantee that data can be operationally useful. A bank’s datasets need to be cleaned, joined, and processed in a way that is repeatable over time.

The example of entity resolution, described above, is a good test of the sustainability of a bank’s data infrastructure. Is entity resolution an ongoing project, or a one-off that leaves banks exposed to risk as new individuals register for accounts and/or conduct financial transactions?

Ultimately, a bank’s data processing pipeline is only as useful as the data flowing through it. That’s why the repeatability and sustainability of data integration are key elements of a CRO’s successful digital transformation initiative across an institution.